For the past three years, enterprise AI has been a mess. Companies have stitched together incompatible tools like digital Frankensteins: OpenAI's GPT-4 for chat, Anthropic's Claude for reasoning, AWS for hosting, Snowflake for data, Salesforce for CRM, and a dozen other point solutions filling the gaps. The result? Expensive, brittle systems that work until they don't, with data flowing through APIs like water through a leaky pipe.

Google has watched this chaos unfold and decided the solution isn't to build a better chatbot. It's to swallow the entire problem whole. At Cloud Next 2026, the company unveiled what it calls the "full-stack AI platform" — a deliberately audacious attempt to offer cloud infrastructure, frontier models, data platforms, workspace tools, and agent frameworks in one integrated system. The pitch is simple: stop cobbling, start building. And the subtext is equally clear: nobody else can offer this because nobody else owns the entire stack.

The Everything Platform

Google's bet rests on a structural claim that sounds like corporate bragging but actually has teeth. Andi Gutmans, a Google Cloud VP, put it plainly in an interview with The Register: "No competitor currently combines cloud computing infrastructure, frontier AI models, and a data platform under one roof." He's not wrong, at least not technically. Amazon has the largest cloud business but relies heavily on Anthropic and open-source models for frontier AI. Microsoft has Azure and a strong enterprise footprint but depends on its OpenAI partnership for frontier models rather than owning them outright. OpenAI has the models but no cloud, no data platform, no enterprise suite.

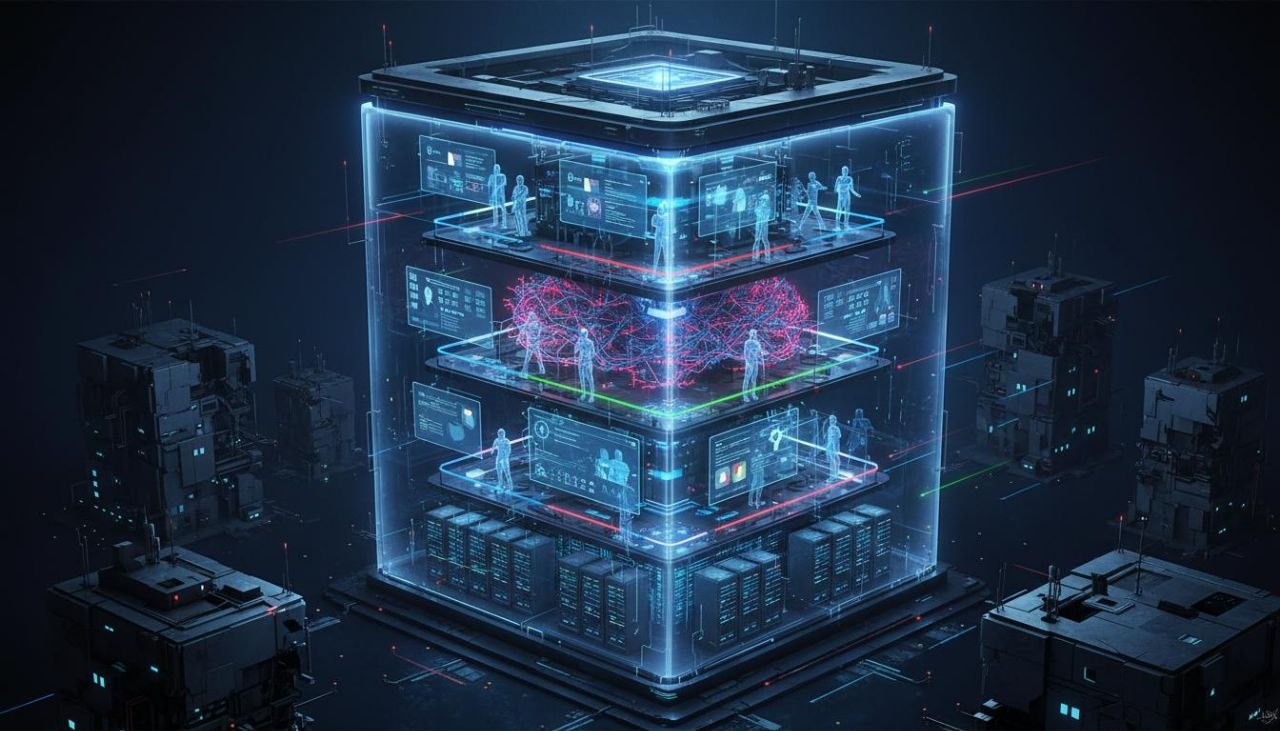

Google, by contrast, can offer all of it. The new platform, which Google is branding under the Gemini Enterprise umbrella, combines several previously separate products into what the company calls a "unified surface." The AI Hypercomputer — Google's purpose-built infrastructure for training and serving models — serves as the foundation. On top of that sits the model layer, where Gemini variants handle everything from reasoning to multimodal tasks. Then comes the data platform, BigQuery, which Google has deeply integrated with AI workflows. Finally, the application layer includes Workspace Studio, which embeds agents directly into Gmail, Docs, Sheets, and Meet.

The integration is deliberately tight. A user can, in theory, ask Gemini to analyze a sales dataset in BigQuery, generate insights, draft a presentation in Google Slides, and schedule a follow-up meeting — all without leaving the Google ecosystem or exporting data to a third party. Whether this works as smoothly as the demos suggest is another question, but the vision is coherent in a way that Microsoft's Copilot-plus-Office or Amazon's Bedrock-plus-Connect offerings are not.

The Agent Play

The most technically interesting piece of Google's announcement is the A2A protocol — Agent-to-Agent communication — which has already been adopted by over 150 organizations. This matters because agents are only useful if they can talk to each other. An agent that can read your email but can't coordinate with an agent that manages your calendar is just a slightly smarter search tool.

Google is positioning A2A as an open standard, which is a clever strategic move. If it becomes the default protocol for agent interoperability, Google effectively controls the routing layer for AI automation — the equivalent of owning HTTP in the early web. The company has also introduced Project Mariner, a browser-based agent that can navigate websites, fill forms, and perform tasks on external systems. This is Google's answer to the reality that most enterprise data and workflows live outside Google's walled garden. Mariner is the bridge.

The Workspace Studio integration is where Google expects to win near-term adoption. Rather than selling AI as a separate product, Google is embedding it directly into tools that hundreds of millions of people already use. The AI co-author in Google Docs, announced alongside the broader platform, isn't revolutionary technology; it's revolutionary distribution. Microsoft is trying the same thing with Copilot, but Google's integration is deeper because it controls the entire stack beneath the application layer.

Embracing Competitors to Beat Them

Perhaps the most strategically interesting aspect of Google's approach is that its platform isn't strictly limited to Google models. The company has been careful to emphasize that customers can run Claude, Llama, Mistral, and other frontier models on Google Cloud infrastructure. On the surface, this looks like openness. In practice, it's a land grab.

By positioning Google Cloud as the neutral platform for all AI, Google hopes to become the infrastructure layer regardless of which models win. If Claude becomes the default coding assistant, Google still gets the compute revenue. If Llama dominates open-source deployments, Google gets the hosting revenue. The models may change; the infrastructure is sticky. It's the same playbook that made AWS the default cloud regardless of which applications ran on top of it.

The risk, of course, is that Google undermines its own model strategy by making it too easy to use competitors. Sundar Pichai has bet Google's future on AI, and the company is processing more than 16 billion tokens per minute through its first-party models. That volume reflects a massive investment, and easy access to Claude could undercut it. The bet is that Google's models are good enough, and the integration tight enough, that most customers will choose the path of least resistance and use Gemini within the Google stack rather than importing alternatives.

The End of the Pilot Era

Forrester's analysis of Cloud Next framed Google's strategy as "the end of the AI pilot era." For three years, enterprises have been running AI experiments — chatbots here, summarization tools there, occasional code generation pilots. The results have been mixed. Some pilots showed promise but couldn't scale. Others scaled but couldn't integrate. Most foundered on the shoals of data security, compliance, and the simple reality that AI systems are harder to productionize than vendors promised.

Google is betting that the problem isn't AI's capabilities but the fragmentation of its delivery. When a company has to manage separate contracts, data flows, security models, and billing systems for each AI tool, the operational overhead overwhelms the productivity gains. Google's answer is to collapse everything into one system with one security model, one data governance framework, and one bill.

The timing is opportune. Enterprise AI spending is accelerating — estimates suggest $700 billion across the industry in 2026 — but frustration with integration is also rising. CIOs are tired of being integration engineers for vendor ecosystems. Google is offering them an escape hatch: stop stitching, start using.

🔥 Our Hot Take

Here's what Google won't say out loud: this is the same playbook it has run for two decades, and in the enterprise the results have been mixed at best. Google builds comprehensive, deeply integrated platforms that are technically impressive and strategically coherent (Search, Android, Chrome, Workspace) and then struggles with the messy, relationship-heavy work of enterprise sales and support.

AWS didn't win cloud because it was the most integrated platform. It won because it launched first, iterated fast, and treated every enterprise customer like they mattered. Microsoft didn't win office productivity because Word was the best word processor. It won because enterprises already used Windows, and the bundling was irresistible. Google's integration advantage is real, but history suggests it's not sufficient.

The deeper question is whether the "full stack" approach even makes sense in AI. The technology is evolving so fast that locking into one vendor's entire ecosystem may be a mistake. Today's frontier model is tomorrow's baseline. The company that wins reasoning may not win multimodal. The best coding assistant may not be the best research tool. Google's bet is that integration matters more than best-of-breed capabilities — that a good enough model deeply integrated into your workflow beats a better model that lives in a separate tab.

For some use cases, that bet is probably right. For others, it's probably wrong. The companies that figure out which is which — and where to integrate versus where to specialize — will win the next phase of enterprise AI. Google has placed its chips on the table. The roll of the dice is coming.