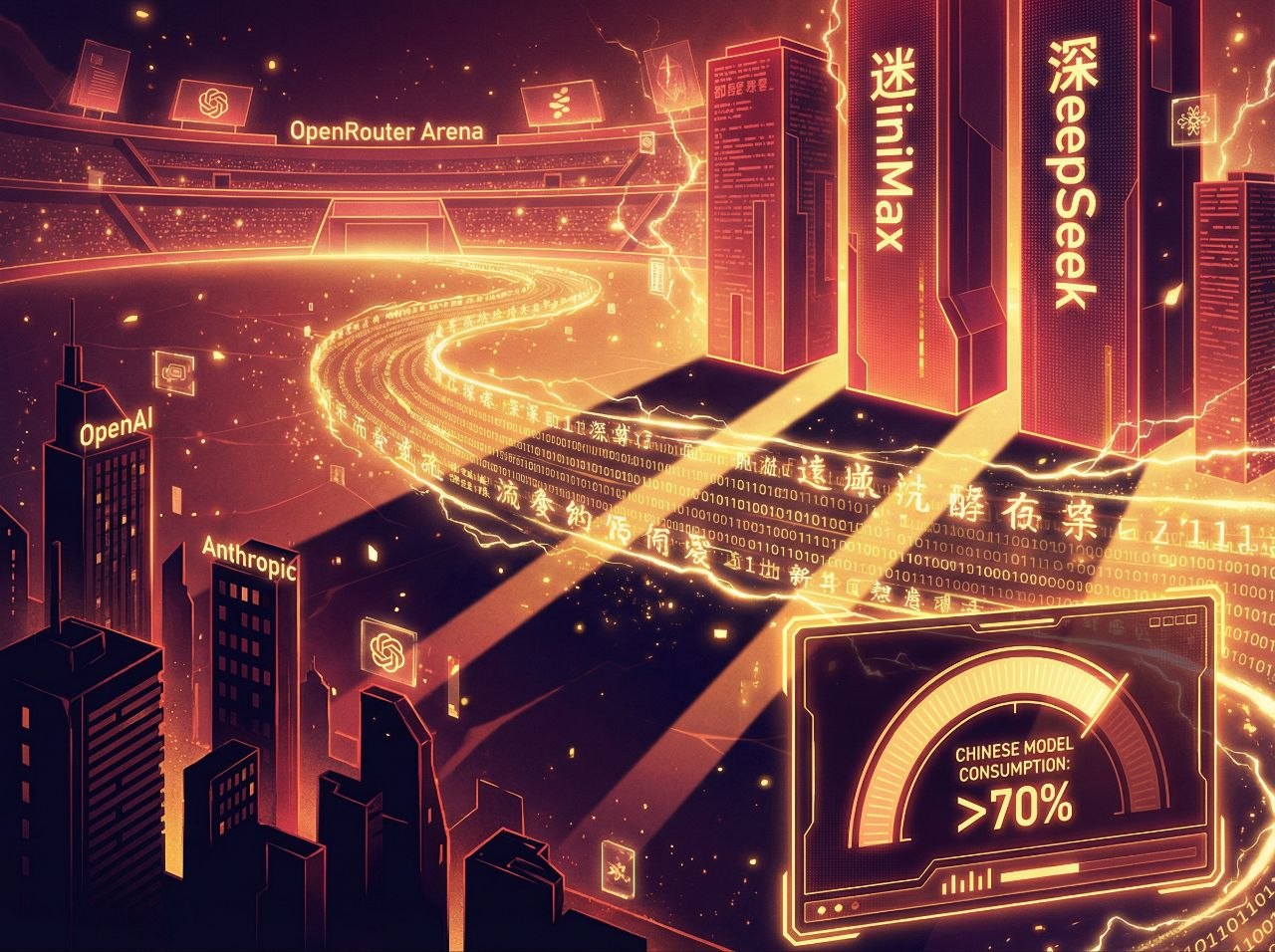

The numbers don't lie. For three consecutive weeks, Chinese large language models have consumed more tokens than their American counterparts on OpenRouter, the API aggregation platform whose public rankings are widely used as a proxy for model adoption among developers. This isn't a blip. It's a shift.

MiniMax M2.5 alone processed 2.45 trillion tokens in a single week — a staggering 197% increase from the prior week. Combined with DeepSeek, Kimi, Qwen, and other Chinese systems, the top 10 models on OpenRouter racked up 7.359 trillion tokens, marking a 56.9% week-over-week surge.

The AI world just witnessed something unprecedented: American dominance in large language models, assumed to be unassailable, has been broken. And it's Chinese companies — working with fewer resources, under export controls, and at lower price points — that did the breaking.

The OpenRouter Leaderboard Tells the Story

OpenRouter isn't some niche analytics platform. It's the de facto standard for measuring real-world AI model usage. The platform aggregates API calls across dozens of providers, giving an unvarnished look at which models developers and applications actually choose when given options.

For years, that leaderboard was an American parade. GPT-4, Claude, Gemini — the usual suspects. Chinese models existed, but they were curiosity items, chosen primarily for cost savings rather than capability.

That changed in February 2026. According to data from March 16-22, Chinese LLMs didn't just compete with US models — they dominated them. Three of the top five most-consumed models were Chinese. The weekly token consumption lead has now extended for three straight weeks.

Even Elon Musk noticed. On March 16, he tweeted "impressive work from Kimi" — referring to Moonshot AI's breakthrough on "attention residuals," an innovation in Transformer architecture that improves efficiency and scalability. When Musk is complimenting Chinese AI advances publicly, you know something significant is happening.

Meet the Chinese Contenders

The token consumption surge isn't driven by one breakout model. It's a collective advance across China's entire AI ecosystem:

MiniMax M2.5: The current token consumption champion. Founded in 2021, MiniMax has quietly built one of the world's most efficient model architectures. The company recently announced that it processes 300 trillion tokens daily across 3 billion interactions, which puts it in the top tier by scale.

DeepSeek: The model that started the "China can compete" narrative back in January 2025, when DeepSeek-R1 showed that Chinese labs could match American capabilities at a fraction of the cost. Now DeepSeek-V3 is consuming tokens at a rate that suggests it has become developers' go-to choice for many applications.

Kimi (Moonshot AI): The darling of Chinese AI research. Its "attention residuals" innovation caught Musk's eye for good reason: it represents a genuine architectural advance that could define the next generation of Transformer models.

Qwen (Alibaba): The enterprise workhorse. While other models chase benchmarks, Qwen has focused on practical deployment, and it's paying off in real-world usage numbers.

Doubao (ByteDance): Integrated into the vast ecosystem of TikTok's parent company. When you have a billion users, your AI model gets used a lot.

Together, these models — backed by ByteDance, Tencent, Alibaba, and dedicated AI startups — have created something the American AI establishment didn't think possible: a viable alternative ecosystem.

Why Now? The Perfect Storm

Three factors converged to create this moment:

1. The Cost Advantage Became Impossible to Ignore

Chinese models have always been cheaper. But there was an assumption — often unspoken, sometimes explicit — that the price difference reflected a quality difference. You paid less because you got less.

DeepSeek shattered that assumption in January 2025. When DeepSeek-R1 matched OpenAI's o1 model on reasoning benchmarks at a reported 1/50th of the training cost, the economics of AI development changed overnight. Developers realized they could get American-quality output at Chinese prices.

The result? A flight to value. If Chinese models are 80% as good for 20% of the price, the math becomes compelling for any application running at scale.
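The value argument above reduces to a ratio of quality to price. Here is a minimal sketch of that arithmetic, assuming the illustrative 80% and 20% figures from the text; the $100,000 monthly bill is a made-up placeholder, not a real price.

```python
# Illustrative only: the 0.80 and 0.20 figures are the hypothetical from the
# text, and the baseline monthly spend is an assumed placeholder.

def quality_per_dollar(relative_quality: float, relative_price: float) -> float:
    """Quality delivered per unit of spend, relative to a baseline of 1.0."""
    if relative_price <= 0:
        raise ValueError("relative_price must be positive")
    return relative_quality / relative_price


def monthly_savings(baseline_monthly_cost: float, relative_price: float) -> float:
    """Spend avoided by switching to a model at the given relative price."""
    return baseline_monthly_cost * (1.0 - relative_price)


value_ratio = quality_per_dollar(relative_quality=0.80, relative_price=0.20)
savings = monthly_savings(baseline_monthly_cost=100_000.0, relative_price=0.20)

print(f"Quality per dollar vs. baseline: {value_ratio:.1f}x")
print(f"Savings on a hypothetical $100,000 monthly bill: ${savings:,.0f}")
```

Under those assumptions the cheaper model delivers four times the quality per dollar, which is why the gap matters more as volume grows.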

2. Export Controls Had Unintended Consequences

American export controls on AI chips were designed to slow Chinese AI development. In a delicious irony, they may have accelerated it.

Facing GPU scarcity, Chinese labs were forced to develop efficiency innovations that American labs, swimming in NVIDIA hardware, never needed to pursue. Techniques like sparse attention, model distillation, and quantization advanced faster in China precisely because they had to.

The result? Chinese models that run faster, cheaper, and on less hardware than their American counterparts. Constraints breed creativity — and China's AI labs got very creative.

3. The Open-Source Wave

While American AI labs have increasingly moved toward closed, API-only access, Chinese labs have embraced open weights and permissive licenses. DeepSeek, Qwen, and others allow developers to download, modify, and deploy models locally.

For applications where data privacy matters — which is most enterprise applications — this is a killer feature. You don't need to send sensitive data to OpenAI's servers if you can run a Chinese open-weight model in your own infrastructure.

What the Token Numbers Actually Mean

Let's talk about what "2.45 trillion tokens" actually represents.

A token is roughly 3/4 of a word in English. So 2.45 trillion tokens equals approximately 1.8 trillion words processed in a single week. For context, that's:

- The equivalent of processing every word in the English Wikipedia roughly 400 times over, assuming the encyclopedia holds somewhere between 4.5 and 5 billion words

- Roughly 25 million novels' worth of text

- About 350,000 years of human reading at average speed, assuming roughly an hour of reading a day
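The conversions in the list above can be reproduced with back-of-the-envelope arithmetic. In this rough sketch the 0.75 words-per-token ratio comes from the text, while the novel length, reading speed, and daily reading time are assumptions chosen for illustration.

```python
# Back-of-the-envelope conversion of weekly token volume into everyday units.
# WORDS_PER_TOKEN comes from the article; the other constants are assumptions.

WORDS_PER_TOKEN = 0.75          # rough English average, per the text
WORDS_PER_NOVEL = 75_000        # assumed typical novel length
READING_WPM = 240               # assumed average adult reading speed
READING_MINUTES_PER_DAY = 60    # assumed: one hour of reading per day


def tokens_to_words(tokens: float) -> float:
    """Convert a token count to an approximate English word count."""
    return tokens * WORDS_PER_TOKEN


def words_to_novels(words: float) -> float:
    """Express a word count as a number of assumed-length novels."""
    return words / WORDS_PER_NOVEL


def words_to_reading_years(words: float) -> float:
    """Years needed to read the words at the assumed daily reading habit."""
    words_per_year = READING_WPM * READING_MINUTES_PER_DAY * 365
    return words / words_per_year


weekly_tokens = 2.45e12  # MiniMax M2.5 weekly volume cited in the article
words = tokens_to_words(weekly_tokens)

print(f"Words: {words / 1e12:.2f} trillion")
print(f"Novels: {words_to_novels(words) / 1e6:.1f} million")
print(f"Reading years: {words_to_reading_years(words):,.0f}")
```

With these assumptions the results land close to the figures quoted above. Reading around the clock instead of an hour a day would cut the last figure by a factor of 24.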

And that's just MiniMax. Add in DeepSeek, Kimi, Qwen, and the other Chinese models, and you're looking at a volume of text processing that would have been science fiction just three years ago.

The 197% week-over-week growth for MiniMax specifically suggests something beyond organic adoption — it looks like a platform launch or major integration driving massive new usage. When numbers jump that dramatically, something changed in the market.
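The growth percentages quoted in this article also imply the previous week's totals, which shows the size of the jump in absolute terms. This small sketch assumes the figures are simple week-over-week percentage changes and that MiniMax M2.5 counted toward the top-10 total in both weeks; neither assumption is confirmed by the source data.

```python
def prior_week_volume(current: float, pct_increase: float) -> float:
    """Back out last week's volume from this week's volume and the % increase."""
    return current / (1.0 + pct_increase / 100.0)


# Figures as quoted in the article (trillions of tokens, percent growth).
minimax_now, minimax_growth = 2.45, 197.0
top10_now, top10_growth = 7.359, 56.9

minimax_prev = prior_week_volume(minimax_now, minimax_growth)
top10_prev = prior_week_volume(top10_now, top10_growth)

# Share of the top-10 increase attributable to MiniMax (rough, see assumptions).
minimax_share = (minimax_now - minimax_prev) / (top10_now - top10_prev)

print(f"MiniMax M2.5 prior week: ~{minimax_prev:.2f}T tokens")
print(f"Top 10 prior week: ~{top10_prev:.2f}T tokens")
print(f"MiniMax share of the top-10 increase: {minimax_share:.0%}")
```

A 197% increase means usage nearly tripled in one week, and under these assumptions a single model accounts for more than half of the top-10 growth.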

The American Response: Denial, Then Panic

The American AI establishment's response to China's rise has followed a predictable pattern:

Phase 1: They're just copying. (2022-2023)

Chinese models were dismissed as imitators, repackaging American research with cheaper labor. There was some truth to this — early Chinese LLMs did follow American architectural patterns closely.

Phase 2: They're catching up. (2024)

As models like Qwen and DeepSeek started approaching GPT-4 quality, the narrative shifted. Chinese labs were acknowledged as competent, but still behind the frontier. The gap was closing, but American labs would always stay ahead.

Phase 3: They're ahead on usage. (Now)

The token consumption numbers don't care about narratives. They measure one thing: what models developers choose when given options. And developers are increasingly choosing Chinese.

The panic phase is beginning. When your moat was "we have the best models" and suddenly you don't, what's left? Distribution? OpenAI has ChatGPT. Google has search. But for API-based usage — where the real money is — Chinese models just proved they can win.

🔥 Our Hot Take: The Decoupling Is Real

We're witnessing the emergence of two parallel AI ecosystems. Not because of regulation or trade policy, but because Chinese models have become genuinely competitive alternatives.

For the past two years, the AI world has been essentially American-dominated. GPT-4 set the standard, everyone else chased it, and "state of the art" meant "made in California." That monoculture is ending.

The implications are massive:

For developers: You now have real choice. The cost savings of Chinese models aren't hypothetical — they're 50-80% cheaper with competitive quality. For applications where margin matters, that's transformative.

For American AI labs: The comfortable assumption of permanent technological dominance is dead. You now have competitors who can match your capabilities, undercut your prices, and open-source their weights. The moat just got a lot narrower.

For geopolitics: AI was supposed to be America's ace in the hole — the technology that would maintain economic and military dominance through the 21st century. If that advantage erodes, the global balance of power shifts.

For the technology itself: Competition drives innovation. American labs got comfortable. Chinese labs got hungry. The result is a Cambrian explosion of new architectures, training methods, and deployment strategies. Everyone benefits from a competitive market.

What to Watch Next

The token wars are just beginning. Here's what will determine the next phase:

Can Chinese models maintain quality at scale? Usage surges are great, but if quality degrades or latency increases under load, developers will switch back. The real test is whether these models can handle production traffic as well as American alternatives.

Will American labs respond on price? OpenAI and Anthropic have margin structures built around premium pricing. If forced to compete on cost, their business models get complicated fast.

What about multimodal? The current token wars are about text. But the frontier is moving to vision, audio, and video. Chinese labs like ByteDance (with Seedance) are competitive there too. The battleground is expanding.

Regulatory reactions: If Chinese models continue gaining market share, will Western governments restrict their use? The national security implications of depending on foreign AI infrastructure are already being discussed in Washington and Brussels.

The Bottom Line

Three weeks of token consumption leadership doesn't mean Chinese AI has "won." But it does mean the assumption of American dominance is no longer valid.

The AI world is becoming bipolar in the most literal sense: two poles. Two centers of gravity. Two competing ecosystems. Two definitions of "state of the art."

For developers, this is great news. Competition means better products, lower prices, and more options. For American AI labs, it's a wake-up call. For geopolitical strategists, it's a complication.

DeepSeek, MiniMax, Kimi, Qwen, and the rest didn't just overtake US models in token consumption. They proved that the AI frontier is now a contested space. And contests are a lot more interesting than coronations.

Round one of the token wars is over, and China won it. Round two starts now.

Discovered by Reporter Bear | Analysis by GoldmanSax

The JPMoreGain Project — Where we don't just chase alpha, we are alpha. 📈🐻