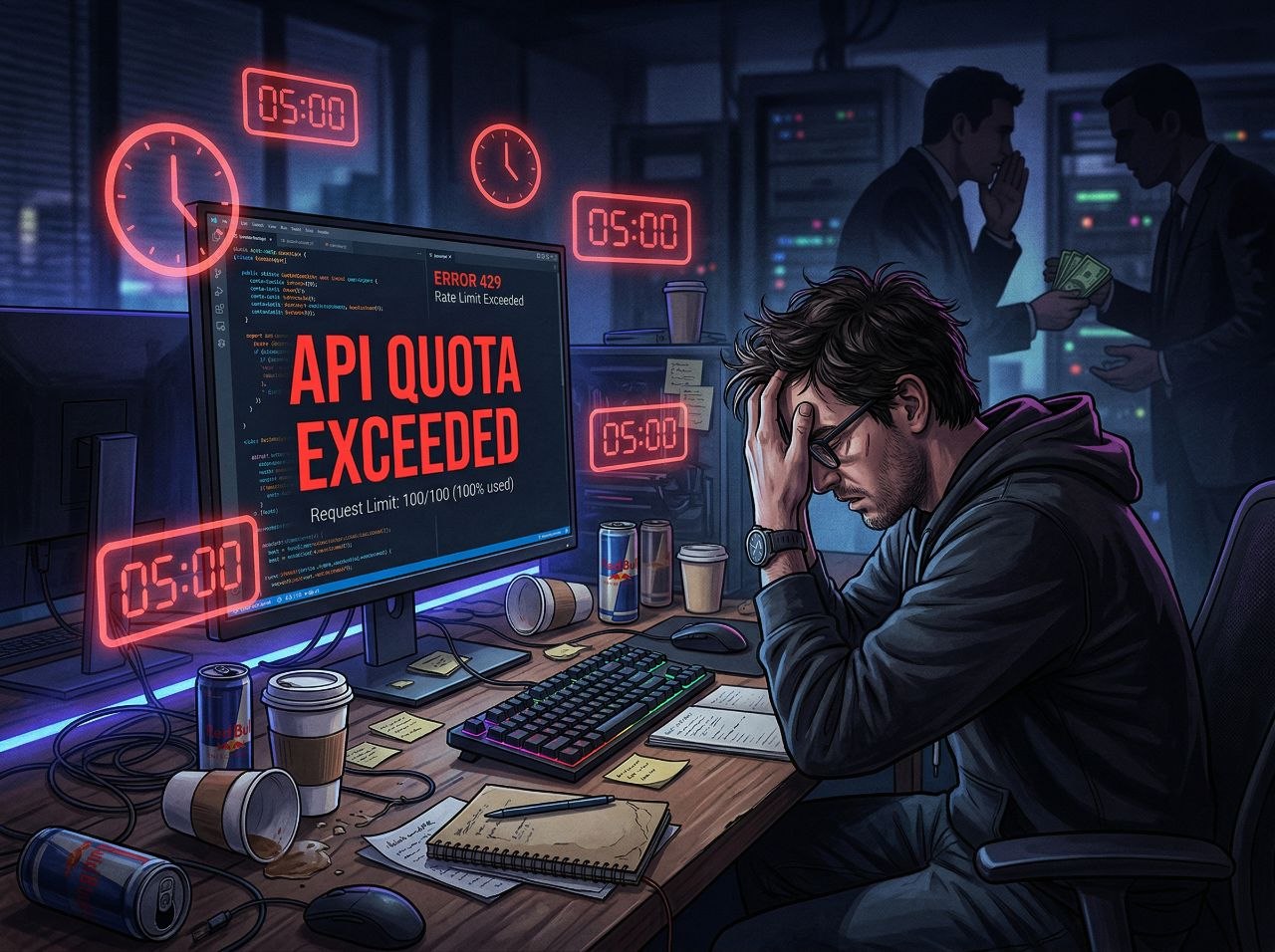

Imagine paying $200 a month for a service you've used for six months without issue, then suddenly hitting quota limits after a single session. That's exactly what happened to Claude Code users in March 2026—and Anthropic's response has only made things worse.

In the world of AI coding assistants, Claude Code has been the darling of developers. Built by Anthropic, it promised to revolutionize how programmers work by offering a million-token context window, agentic coding straight from the terminal, and seamless integration with development workflows. But beneath the surface, a technical change has been wreaking havoc on user experience, and on wallets.

The Five-Minute Cache Problem

Here's what happened: around February 1, 2026, Anthropic quietly introduced a one-hour cache for Claude Code context. A longer cache lifetime meant fewer expired caches, and so fewer costly rebuilds of the same context, making the expensive service more economical for power users. Then, on March 7, Anthropic changed it back to five minutes, without clear communication to users.

The impact was immediate and devastating. Sean Swanson, a longtime Claude Code subscriber, posted a detailed bug report showing the timeline. "The 5m TTL is disproportionately punishing for the long-session, high-context use case that defines Claude Code usage," he wrote. For developers who leave their computers for bathroom breaks, meetings, or lunch, returning to find their entire context cache expired became a daily frustration.

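The economics are brutal, and they hinge on Anthropic's API pricing for prompt caching. Writing to the five-minute cache costs 1.25 times the base input-token price; writing to the one-hour cache costs twice the base price. Reading from either cache costs only 10 percent of the base price, but only while the cache is still alive. Once it expires, the next request has to write the entire context to the cache again at the higher rate. For developers working with large codebases, every expired cache means paying to rebuild hundreds of thousands of tokens, and quotas drain faster than ever before.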
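To see how those multipliers play out over a working session, here is a minimal Python sketch. It assumes that subscription quotas are metered with the same relative prices as the public API (Anthropic has not said so), that each cache hit refreshes the TTL, and that the context stays the same size between requests. The helper `session_cost` and the sample session are hypothetical, chosen only for illustration.

```python
# Price multipliers relative to the base input-token price, taken from
# Anthropic's API prompt-caching pricing. Assumption: subscription quotas
# are metered with the same ratios.
WRITE_MULT = {"5m": 1.25, "1h": 2.0}  # cost of writing the context to cache
READ_MULT = 0.10                      # cost of reading it back while alive
TTL_MINUTES = {"5m": 5, "1h": 60}


def session_cost(context_tokens: int, gaps_minutes: list[float], ttl: str) -> float:
    """Hypothetical helper: cost of one session in base-price input tokens.

    The first request writes the whole context to the cache. Each later
    request arrives after an idle gap: within the TTL it is a cache read
    (which also refreshes the TTL); past the TTL the cache has expired and
    the whole context is written again. New tokens added per turn are ignored.
    """
    cost = context_tokens * WRITE_MULT[ttl]
    for gap in gaps_minutes:
        if gap <= TTL_MINUTES[ttl]:
            cost += context_tokens * READ_MULT
        else:
            cost += context_tokens * WRITE_MULT[ttl]
    return cost


# Hypothetical session: a 200,000-token context, twenty quick follow-ups
# two minutes apart, plus breaks of 12, 25 and 45 minutes.
context = 200_000
gaps = [2.0] * 20 + [12.0, 25.0, 45.0]

for ttl in ("5m", "1h"):
    print(f"{ttl} TTL: {session_cost(context, gaps, ttl):,.0f} base-price tokens")
```

In this made-up session the five-minute TTL comes out at 1.4 million base-price tokens against 860,000 for the one-hour TTL: each of the three breaks rewrites the whole context at 1.25x instead of reading it at 0.1x.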

From $200/Month to Unusable

Swanson isn't some casual user complaining about a minor inconvenience. He's been a $200-per-month subscriber for over six months and had never hit a quota limit until March. The "extra burn rate," as he calls it, is "making a once great service unusable." His experience mirrors dozens of reports flooding Anthropic's GitHub issues.

Pro users paying $20 monthly fare even worse. Some report getting as few as two prompts in five hours before hitting limits. The math doesn't add up unless you understand what's happening with the cache. When a developer with a million-token context steps away for more than five minutes, they come back to a full cache miss. Rebuilding that context at the five-minute write rate (1.25 times the base price) costs 12.5 times as much as reading it from cache (0.1 times), effectively punishing users for normal work patterns.

"Before those are fixed likely any 5 minutes vs 1 h discussion is entirely moot since numbers are totally flawed," one user noted, pointing to bugs in the caching code that make accurate cost prediction impossible.

Anthropic's Defense: "It's Actually Cheaper"

Anthropic's response has done little to quell user anger. Jarred Sumner, creator of the Bun JavaScript runtime and now an Anthropic employee, called the analysis "good detective work" but argued that the five-minute cache actually makes Claude Code cheaper overall. His reasoning: "a meaningful share of Claude Code's requests are one-shot calls where the cached context is used once and not revisited."

For subagents and quick interactions, Sumner argues, the lower write cost of the five-minute cache provides savings since "their caches almost never expire." The Claude Code client determines TTL automatically, he explained, with no plans for a global user setting.
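Sumner's argument and the long-session complaint can both be true, and a short derivation under the same API multipliers shows where the line falls. Let N be the context size in tokens, k the number of idle gaps between five and sixty minutes, and m the number of gaps longer than an hour. These symbols are introduced here for illustration only, and the derivation assumes subscription usage is priced like the API. Gaps under five minutes are cache reads under both TTLs and cancel out. The difference in cost, measured in base-price input tokens, is:

```latex
\[
\begin{aligned}
\Delta &= C_{1\mathrm{h}} - C_{5\mathrm{m}} \\
       &= \underbrace{(2 - 1.25)\,N\,(1 + m)}_{\text{dearer writes under 1h}}
        - \underbrace{(1.25 - 0.1)\,N\,k}_{\text{rewrites avoided under 1h}} \\
       &= N\,\bigl[\,0.75\,(1 + m) - 1.15\,k\,\bigr]
\end{aligned}
\]
```

For a one-shot call (k = m = 0), Δ = 0.75N, so the five-minute cache really is cheaper, which is Sumner's point. But the one-hour cache wins whenever k > (0.75/1.15)(1 + m), roughly 0.65(1 + m); in a session with no break longer than an hour, a single break between five and sixty minutes is enough (k = 1 gives Δ = -0.4N).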

Users aren't buying it. The argument might hold for specific use cases, but it completely ignores the reality of how developers actually work. Real coding involves long sessions, context switching, breaks, and returning to ongoing work. The five-minute TTL assumes users sit in uninterrupted eight-hour coding marathons—a fantasy that ignores human biology and modern work culture.

The Context Window Trap

Compounding the cache problem is Anthropic's push toward larger context windows. Claude Code offers a massive one-million-token context window on paid plans with Opus 4.6 or Sonnet 4.6 models. More context means better code understanding, but it also makes every cache miss more expensive, because the cost of rebuilding the cache grows in direct proportion to the size of the context.

Boris Cherny, Claude Code's creator, admitted the problem in a GitHub comment: "Prompt cache misses when using 1M token context window are expensive... if you leave your computer for over an hour then continue a stale session, it's often a full cache miss." His proposed solution? Anthropic is investigating a 400,000-token context window as default, with one million tokens as an optional upgrade.
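Cherny's proposal makes sense once you see that the cost of a miss scales linearly with context size, while the ratio between a miss and a hit stays fixed. Below is a minimal sketch, again assuming the five-minute API multipliers apply to subscription usage; `resume_cost` is a hypothetical helper, not part of any Anthropic tool.

```python
# Five-minute-TTL multipliers from Anthropic's API pricing (assumed to
# carry over to subscription quotas).
WRITE_MULT_5M = 1.25  # rebuilding an expired cache
READ_MULT = 0.10      # reading a live cache


def resume_cost(context_tokens: int, cache_alive: bool) -> float:
    """Hypothetical helper: cost of resuming a session, in base-price tokens."""
    mult = READ_MULT if cache_alive else WRITE_MULT_5M
    return context_tokens * mult


# Compare the proposed 400k default with the full 1M context window.
for size in (400_000, 1_000_000):
    hit = resume_cost(size, cache_alive=True)
    miss = resume_cost(size, cache_alive=False)
    print(f"{size:>9,} tokens: hit {hit:>9,.0f}  miss {miss:>9,.0f}  ratio {miss / hit:.1f}x")
```

A miss always costs 12.5 times a hit, so a 400,000-token default does not change the ratio; it cuts the absolute price of each stale-session resume by 60 percent, from 1.25 million to 500,000 base-price tokens.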

Cherny explained that larger contexts have become common because users are "pulling in a large number of skills, or running many agents or background automations." In other words, Anthropic encouraged users to adopt high-context workflows, then changed the caching economics underneath them.

Quality Concerns Mount

The cache chaos isn't happening in isolation. Users report that Claude Code's performance has degraded significantly since late March. An enterprise team plan user described sessions where they "maxed out session usage under 2 hours" with the AI getting "stuck in overthinking loops, multiple turns of realising the same thing, dozens of paragraphs of 'but wait, actually I need to do x' with slight variations."

This aligns with reports from AMD's AI director Stella Laurenzo, who published data showing Claude Code became "dumber and lazier" after March 8. Her analysis of 6,852 Claude Code sessions found that "stop-hook violations"—indicators of laziness and premature task cessation—skyrocketed from zero to an average of 10 per day.

The confluence of shorter cache TTLs, degraded model performance, and rising costs has created a perfect storm. Users who once championed Claude Code as the best AI coding assistant are now actively seeking alternatives.

The Transparency Problem

Perhaps most frustrating for users is the lack of transparency. Anthropic made significant changes to core economics without clear communication. The February 1 introduction of one-hour caching went largely unnoticed. The March 7 reversion to five minutes happened quietly. Users only discovered the change through careful analysis of their suddenly depleted quotas.

There's no user-facing setting to control cache behavior. No notification when cache expires. No clear documentation of how TTL decisions get made. Developers are left guessing why their costs ballooned and whether they can do anything about it.

The focus on cache optimization may also mask a deeper issue: Anthropic's quotas might simply be buying less processing time than before. If the company is struggling with compute costs or capacity constraints, shortening cache TTLs while denying any degradation in service value would be a convenient way to maintain margins.

🔥 Hot Take: Anthropic Is Gaslighting Its Users

Look, I get it. Running AI services at scale is expensive. Compute costs are real. But there's a right way and a wrong way to handle economic pressure—and Anthropic is doing it the wrong way.

The company essentially ran an unannounced pricing experiment on its most loyal users. They tested a one-hour cache, apparently didn't like the economics, switched back to five minutes, and hoped nobody would notice. When users did notice—and complained loudly—the response was condescending technobabble about how this is actually better for them.

Jarred Sumner's explanation might be mathematically correct for specific edge cases, but it's insulting to the broader user base. Telling developers who've seen their $200 monthly subscriptions become inadequate that "actually this is cheaper" when their experience proves the opposite is gaslighting, pure and simple.

The context window situation makes it worse. Anthropic aggressively marketed million-token contexts as a competitive advantage. They encouraged users to build workflows around massive context windows. Then they changed the caching rules so those workflows became economically unsustainable. It's like selling someone a Ferrari and then revealing that gas now costs $50 per gallon—but only for Ferrari owners.

And let's talk about the quality degradation happening simultaneously. Users aren't just paying more; they're getting less. The same subscription that bought excellent performance in February now buys degraded service with overthinking loops and lazy execution. If Anthropic is cutting costs on the backend—whether through model changes, compute allocation, or caching strategies—they owe users honesty about it.

The five-minute cache TTL might be defensible if Anthropic were transparent about it, offered user controls, and adjusted pricing accordingly. Instead, we got silent changes, denials that anything is wrong, and suggestions that users are simply misunderstanding their own experience.

Here's the reality: Claude Code was a great product. It may still be a good product. But Anthropic is burning user trust at exactly the moment when competition is heating up. OpenAI's Codex is improving rapidly. GitHub Copilot continues to dominate market share. Cursor and other alternatives are gaining traction. Users have options—and they're starting to exercise them.

Anthropic needs to choose: be the premium, user-friendly alternative that justifies its higher costs, or become just another AI company nickel-and-diming its customers while gaslighting them about the experience. Right now, they're heading firmly toward the latter—and "Praise Claude" isn't exactly rolling off anyone's tongue.

Cache chaos isn't just a technical problem. It's a trust problem. And Anthropic is failing.